
Unofficial PyTorch implementation of SentiNet. GitHub repo: https://github.com/CassiniHuy/trojan-attacks-and-defenses

Feel free to point out possible implementation errors.

How does SentiNet work?

SentiNet uses Grad-CAM to locate localized adversarial patches (including backdoor triggers) in an input image. It extracts these high-impact regions from a suspect image and overlays them on a set of benign test images. So:

- If the extracted patches are malicious, they can mislead the model on these benign images.

- If the extracted patches are benign, they probably cannot mislead the model.

SentiNet therefore tells benign and adversarial examples apart by how differently their extracted patches behave in this overlay test. The paper explains the details in an easy-to-follow way: https://arxiv.org/abs/1812.00292
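The overlay test described above can be sketched in a few lines of NumPy. This is only an illustration under stated assumptions, not the code from the repo: `overlay`, `fooled_rate` and `toy_predict` are hypothetical names, the mask is written by hand instead of being derived from Grad-CAM, and the "model" is a toy rule that fires on a white 2x2 corner.

```python
import numpy as np

def overlay(images, source, mask):
    """Copy the masked pixels of `source` onto every image in `images`.

    images: (N, H, W, C) float array, source: (H, W, C), mask: (H, W) bool.
    """
    m = mask[None, :, :, None]  # broadcast over batch and channel axes
    return np.where(m, source[None], images)

def fooled_rate(predict, benign, source, mask, target_label):
    """Fraction of patched benign images that `predict` assigns to `target_label`."""
    preds = predict(overlay(benign, source, mask))
    return float(np.mean(preds == target_label))

# Toy stand-in for a backdoored model (an assumption for this demo):
# it outputs class 1 whenever the top-left 2x2 corner is nearly white.
def toy_predict(x):
    return (x[:, :2, :2, :].mean(axis=(1, 2, 3)) > 0.9).astype(int)

rng = np.random.default_rng(0)
benign = rng.uniform(0.0, 0.5, size=(8, 6, 6, 3))  # dark benign images
suspect = benign[0].copy()
suspect[:2, :2, :] = 1.0  # stamp the trigger on a copy of one image

# Hand-written masks; a real pipeline would derive the region from a saliency map.
trigger_mask = np.zeros((6, 6), dtype=bool)
trigger_mask[:2, :2] = True  # covers the trigger
other_mask = np.zeros((6, 6), dtype=bool)
other_mask[4:, 4:] = True  # covers a benign region of the same size

rate_trigger = fooled_rate(toy_predict, benign, suspect, trigger_mask, 1)
rate_other = fooled_rate(toy_predict, benign, suspect, other_mask, 1)
print(rate_trigger, rate_other)
```

In this toy setup the patch that contains the trigger flips every benign image to the target class, while an equally sized benign patch flips none. The gap between those two rates is the signal the defense relies on.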

Code Usage

An example of how to run it:

    import torch, os

Configuration:

You can find the config class in the file defense/sentinet.py:

    GAUSSIAN_NOISE = 1

Output

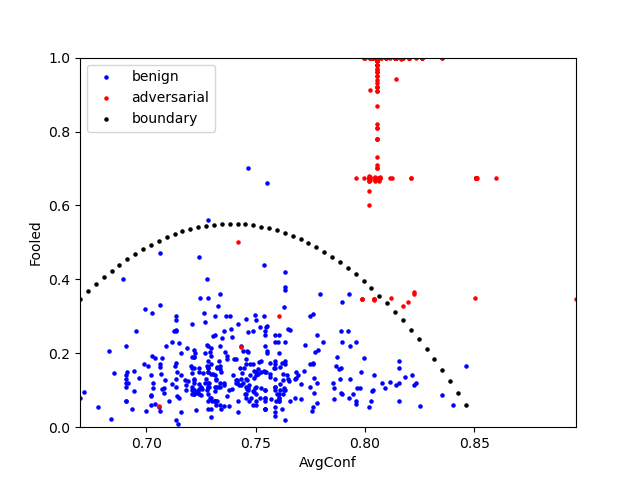

The sentinet.detect method will output 3 files (see samples/sentinet) and 2 files containing the results: sentinet-results.json and sentinet-results.png. The sentinet-results.json containing all detailed results such as the curve function weights, training/testing points. The sentinet-results.png plots the curve and benign/adv points. It looks like the following example (running for the badnets attack.):